How to render conversation analysis style transcriptions in LaTeX

UPDATE: I’ve now found there is a better way to do this, which I’ve documented here.

A large part of my research is going to involve conversation analysis, which has a rather beautiful transcription style developed by the late Gail Jefferson to indicate pauses, overlaps, and prosodic features of speech in text.

There are a few LaTeX packages out there for transcription, notably Gareth Walker’s ‘convtran’ LaTeX styles. However, they’re not specifically developed for CA-style transcription, and don’t feel flexible enough for the idiosyncrasies of many CA practitioners.

So, without knowing a great deal about LaTeX (or CA for that matter), I spent some time working through a transcript from Pomerantz, A. (1984). Agreeing and disagreeing with assessments: Some features of preferred/dispreferred turn shapes. In J. M. Atkinson & J. Heritage (Eds.), Structures of social action: Studies in Conversation Analysis (pp. 57-102). Cambridge: Cambridge University Press.

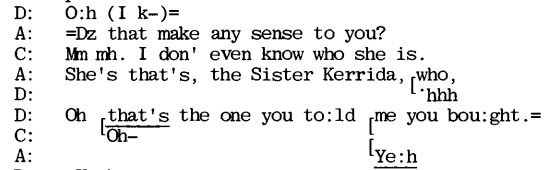

Here’s an image version from page 78:

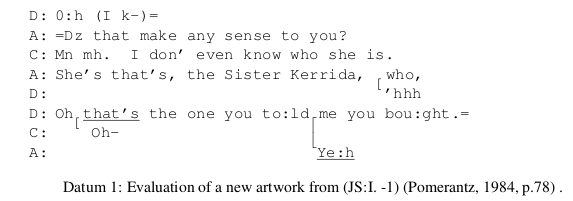

Here’s how I figured that in LaTeX:

\begin{table*}[!ht]
\hfill{}
\texttt{
\begin{tabular}{@{}p{2mm}p{2mm}p{150mm}@{}}
& D: & 0:h (I k-)= \\
& A: & =Dz that make any sense to you? \\
& C: & Mn mh. I don' even know who she is. \\
& A: & She's that's, the Sister Kerrida, \hspace{.3mm} who, \\
& D: & \hspace{76mm}\raisebox{0pt}[0pt][0pt]{ \raisebox{2.5mm}{[}}'hhh \\
& D: & Oh \underline{that's} the one you to:ld me you bou:ght.= \\
& C: & \hspace{2mm}\raisebox{0pt}[0pt][0pt]{ \raisebox{2.5mm}{[}} Oh-- \hspace{42mm}\raisebox{0pt}[0pt][0pt]{ \raisebox{2mm}{$\lceil$}} \\
& A: & \hspace{60.2mm}\raisebox{0pt}[0pt][0pt]{ \raisebox{3.1mm}{$\lfloor$}}\underline{Ye:h} \\
\end{tabular}
\hfill{}
}
\caption{ Evaluation of a new artwork from (JS:I. -1) \cite[p.78]{Pomerantz1984} .}
\label{ohprefix}
\end{table*}

Here’s the result, which I think is perfectly adequate for my needs. Now that I know how to do it, it shouldn’t take too long to replicate for other transcriptions.
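For replicating this across other transcripts, the repeated \hspace and \raisebox pairs could be wrapped in a small macro. The following is only a sketch: \overlapmark is a hypothetical name that does not appear in the listing above, the narrower column width is an assumption chosen to fit a default article page, and the offsets would still need hand-tuning for each overlap.

```latex
\documentclass{article}
\usepackage[T1]{fontenc}

% Hypothetical helper (not part of the original listing).
% #1 = horizontal offset from the start of the line
% #2 = how far to raise the symbol
% #3 = the symbol itself, e.g. [
% The outer \raisebox gives the mark zero height and depth,
% so raising it does not change the spacing between rows.
\newcommand{\overlapmark}[3]{%
  \hspace{#1}\raisebox{0pt}[0pt][0pt]{\raisebox{#2}{#3}}}

\begin{document}
\texttt{%
\begin{tabular}{@{}lp{110mm}@{}}
A: & She's that's, the Sister Kerrida, who, \\
D: & \overlapmark{76mm}{2.5mm}{[}'hhh \\
\end{tabular}}
\end{document}
```

Symbols that only exist in math mode, such as the floor and ceiling brackets, would need to be passed wrapped in dollar signs.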

I had to make a few changes to the document environment to get this to work, including:

- \usepackage[T1]{fontenc} to make sure that the double dashes (--) were interpreted as a long dash while in the texttt environment.

- I also had to do \renewcommand{\tablename}{Datum} to rename the “Table” to “Datum”, because I’m only using the table for formatting (shades of 1990s-style html positioning).

- \usepackage{caption} to suppress caption printing where I wanted the datum printed without a legend (using \caption* instead of \caption).
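Put together, those preamble changes might look something like the minimal document below. This is a sketch rather than my actual preamble: the twocolumn article class, the simplified two-column tabular, and the caption text are assumptions made for illustration.

```latex
\documentclass[twocolumn]{article}

\usepackage[T1]{fontenc}          % T1 encoding, for the dashes inside \texttt
\usepackage{caption}              % provides the starred \caption*
\renewcommand{\tablename}{Datum}  % captions read "Datum 1" instead of "Table 1"

\begin{document}

\begin{table*}[!ht]
  \centering
  \texttt{%
  \begin{tabular}{@{}ll@{}}
    A: & =Dz that make any sense to you? \\
    C: & Mn mh. I don' even know who she is. \\
  \end{tabular}}
  % \caption* prints the text with no "Datum N:" label and no number
  \caption*{An unnumbered datum.}
\end{table*}

\end{document}
```

If a language package such as babel is loaded, the \renewcommand for \tablename may need to be applied through that package's own mechanism instead, since it can reset the name at the start of the document.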

The above example is designed to break out of a two-column article layout into a full-page, centre-positioned spread, so those directives are probably not relevant to using it in the flow of text or within a single column. I went that way because the fixed-width (texttt) font was too large to fit into one column without making it unreadably small. I wanted to keep the fixed-width font because its evenly spaced letters seem to make it easier to read the transcription as a timed movement from left to right.

I hope this is useful to someone. If I find a better way of doing this (with matrices and avm as I’ve been advised), I’ll update this post. Any pointers are also much appreciated as I think I’m going to be doing a lot more of this in the next few years.

There are other horrors in here, and it was a really annoying way to spend a day, but this method seems to get me as far as I need to go right now.

Many thanks to Chris Howes for holding my hand through this.
