How do people make sense of Turner Prize nominee Tino Sehgal’s These Associations? And what can cognitive scientists learn from the way they do it?

The result of the Turner prize 2013 has been reported worldwide as a shock win – mostly because this year, the chosen artwork is less shocking than usual.

French artist Laure Prouvost’s madcap films overturned both critical expectations and the bookies’ 6/1 odds against her to win. While William Hill and Ladbrokes had David Shrigley’s mischievous peeing sculptures as a 2/1 favourite, the critics had fancied Tino Sehgal’s live conceptual/performance artworks.

The Turner prize and its contestants have become famous for creating controversy and public discussion about the limits of what artists, galleries and critics consider worthy of aesthetic judgement. However, new research from Queen Mary University of London’s Cognitive Science Group suggests that audiences are generally unfazed by this kind of issue. In ordinary conversations between visitors to the Tate Modern, one of the most supposedly ‘experimental’ artworks in this year’s Turner Prize was immediately and unproblematically subjected to complex processes of aesthetic judgement by the viewing public.

To find out how (and if) people made sense of Tino Sehgal’s Turner Prize-nominated artwork These Associations, I recorded and analysed over two hundred ordinary conversations between visitors to the Tate Modern’s Turbine Hall.

I collected recordings of visitors’ conversations over the duration of Sehgal’s performance piece, for which the artist trained 300 participants (including myself) to engage in a series of coordinated movements on the floor of the 3400m² Turbine Hall.

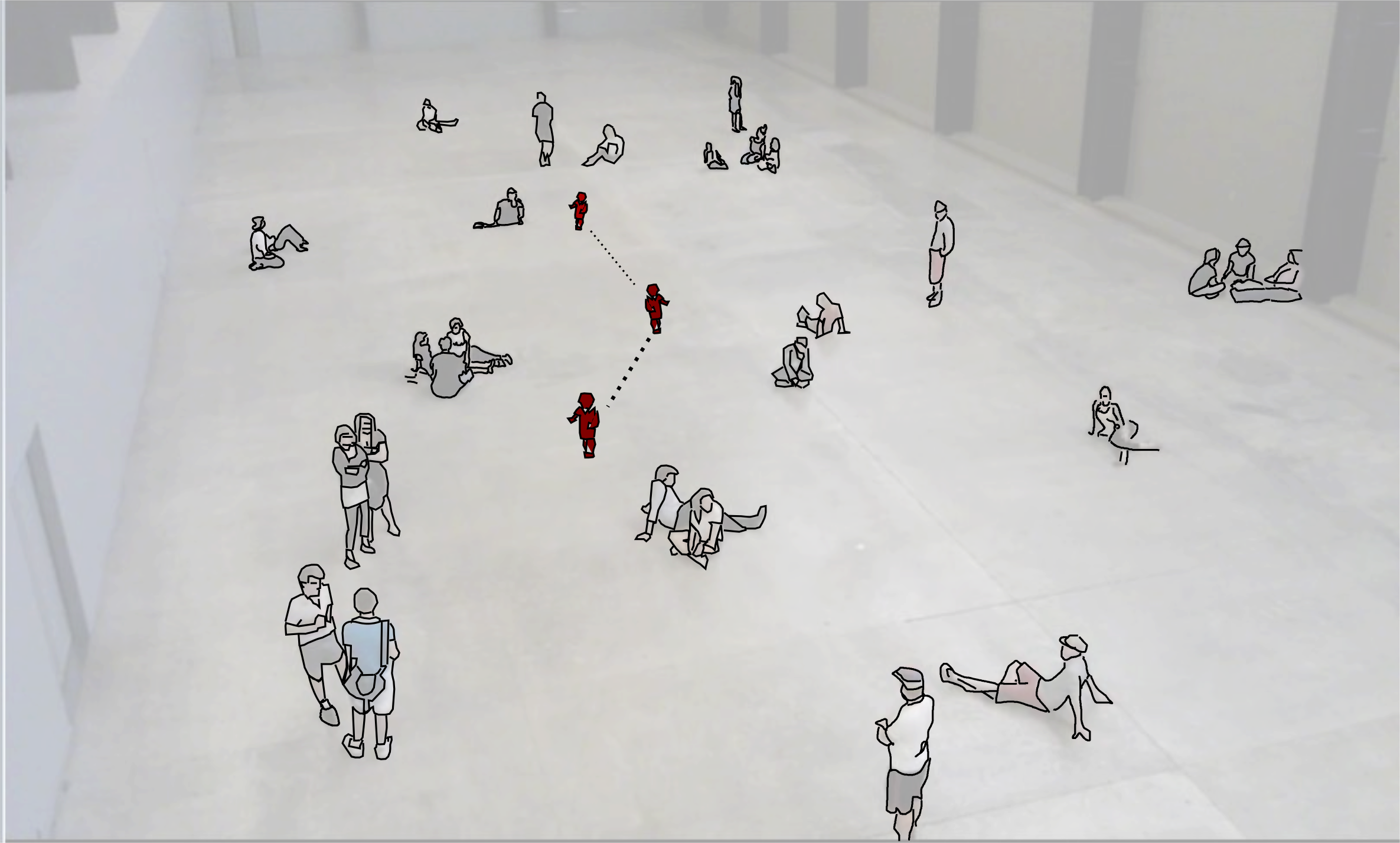

Throughout gallery opening hours in the summer of 2012, up to 70 of these participants at a time would blend into the crowds of tourists, gallery-goers and school children that usually fill the hall. Sometimes Sehgal’s participants would engage visitors in one-to-one conversations; at other times they would break out into songs or chants, run in a flocking pattern, or slow-walk through the hall as a large group. For the visitors on the balcony overlooking the hall, this was quite a spectacle, and they would often stand in couples or small groups talking and watching.

Although the ‘But is it art?’ question is always in the headlines when the Turner Prize is announced, visitors to the Turbine Hall seemed not to care one way or the other. While the question was frequently invoked, and many guests simply assumed that what they were witnessing was an unauthorized and spontaneous ‘flashmob’, most conversations quickly moved on to discussing and describing the action unfolding in front of them – more like sports commentary or a nature documentary voice-over than art criticism.

People’s commentaries were often funny, insightful and playful. “Standing… Standing’s really contemporary right now” was a young American woman’s description of one of Sehgal’s living tableau scenes. “A bit like watching paint dry isn’t it” was one older English woman’s assessment, although she and her friend then discussed what they were observing in detail for half an hour. Several groups of children also learned to play ‘Pooh sticks’ with the piece: as Sehgal’s participants marched under the viewing bridge, they would pick favourites and then run to see whose would walk out first on the other side.

Even negative assessments of the work were then justified in discussion of the details of the piece: how it worked, what it looked like, who the trained participants were and how to tell them apart from ordinary gallery visitors, and what underlying rationale might account for different patterns, behaviours or movements.

Many visitors who arrived on the balcony talking to each other would lapse into long comfortable silences (quite unusual in normal conversation), while others would make ‘oohing’ and ‘aahing’ noises like people watching firework displays. However, both noisy and silent watchers would then explain their reactions to each other in terms of their analysis of the piece. Most striking was how people would seamlessly switch between talking about the artwork and talking about other aspects of their lives: work, music, London, the events of their day, etc., then back to the piece. Often assessments of the artwork were bound up in practical issues about whether to move on or stay watching, what to eat for lunch or what to view next.

The initial findings of this research suggest that seeing something as art – whether good or bad – is an ordinary, everyday social activity. Aesthetic judgements of Sehgal’s work did not come out as individuals’ lofty ‘judgements of taste’, but were embedded in people’s everyday social activities. So the humour and skill with which people explained what they were experiencing to one another was central to their enjoyment of Sehgal’s work – whether or not they categorized it as art.

The study of aesthetics in psychology, neuroscience and artificial intelligence has tended to concentrate on people’s reactions to formal properties of traditional artistic objects or images, on survey data, or on tests of people’s basic perceptual or cognitive capabilities. These approaches tend to avoid dealing with artworks which – like many that are nominated for the Turner Prize – use non-conventional art forms because they may not be perceived ‘correctly’ as art outside of a gallery context.

But by looking at how people spontaneously explain their own perceptions of new and unfamiliar art forms to each other while in the process of experiencing them, this research explores how judgements of taste constantly adapt to changing social contexts. Finding out how interaction shapes the contexts in which aesthetic judgements ordinarily happen may be key to a more general understanding of how human cognition and perception adapt to constantly changing social situations and norms.