The ‘data session’ has become my favourite research activity since starting to work with ethnomethodology (EM) and conversation analysis (CA). However, this crucial bit of analytic trade-craft seems poorly documented as a research process – with minimal references scattered throughout textbooks, articles and course materials. This post pulls together some of the descriptions and tips I’ve found relating to the practical activity of doing data sessions, followed by a short account of why I am so fond of this wonderful research practice.

Early CA work

I can’t find any direct references to data session practices in any of the early CA literature from Sacks, Schegloff or Jefferson. However, there are some very interesting methodological discussions that provide insight into how data is prepared and collected prior to a principled collection being established in the following two papers:

I can only assume that the trade craft of CA was being established at this time, so the practice of the data session was not yet at a point where it was stable, well understood and ready to be written up for instructional purposes. I’ve heard stories (but can’t find any write-ups) of how Gail Jefferson was particularly involved in its development as a pedagogical/analytic practice. I would be very interested in reading these stories – and particularly learning about any rules / procedures for doing data sessions that may have been established in these early days.

First instructional descriptions

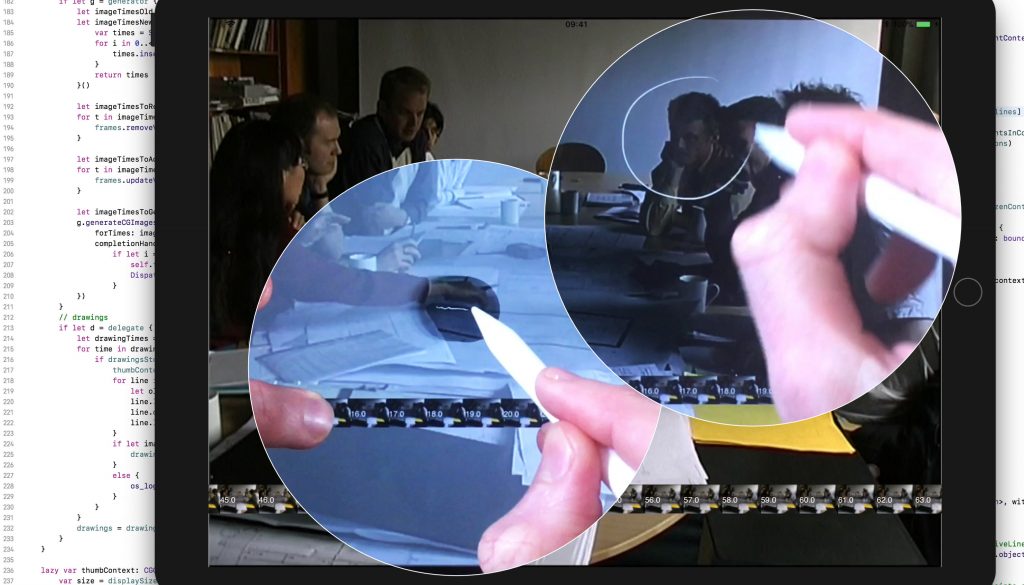

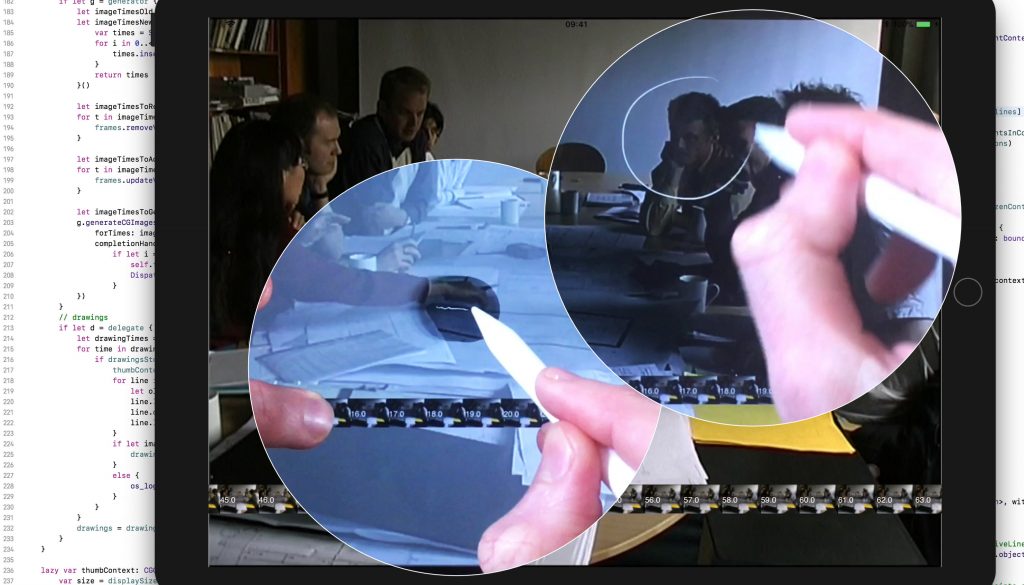

Paul ten Have’s “Doing Conversation Analysis”, first published in 1999, provides one of the first instructional descriptions of the data session I can find – and lays out the essentials of what the data session consists of very clearly:

“The data session can be seen both as a kind of playground to mutually inspire one’s understanding of the data, and as an environment that requires a rather specific ‘discipline’. A ‘data session’ is an informal get-together of researchers in order to discuss some ‘data’ – recordings and transcripts. The group may consist of a more or less permanent coalition of people working together on a project or in related projects, or an ad hoc meeting of independent researchers. The basic procedure is that one member brings in the data, for the session as a whole or for a substantial part of it.”

He then provides – as far as I can find – the first description of the actual practical activity in the data session, and how it functions as a pedagogical as well as an analytic practice:

“This often involves playing (a part of) a tape recording and distributing a transcript, or sometimes only giving a transcript. The session starts with a period of seeing/hearing and/or reading the data, sometimes preceded by the provision of some background information by the ‘owner’ of the data. Then the participants are invited to proffer some observations on the data, to select an episode which they find ‘interesting’ for whatever reason, and formulate their understanding, or puzzlement, regarding that episode. Then anyone can come in to react to these remarks, offering alternative, raising doubts, or whatever. What is most important in these discussions is that the participants are, on the one hand, free to bring in anything they like, but, on the other hand, required to ground their observations in the data at hand, although they may also support them with reference to their own data-based findings or those published in the literature. One often gets, then, a kind of mixture, or coming together, of substantial observations, methodological discussions, and also theoretical points. Data sessions are an excellent setting for learning the craft of CA, as when novices, after having mastered some of the basic methodological and theoretical ideas, can participate in data sessions with more experienced CA researchers. I would probably never have become a CA practitioner if I had not had the opportunity to participate in data sessions with Manny Schegloff and Gail Jefferson.”

Ten Have, P. (2007). Doing conversation analysis: A practical guide (2nd ed.). London: Sage Publications. pp. 140-141.

He also mentions that these sessions are poorly documented, writing (in the 1999 and 2007 editions of his book) that he can only find one real description, in Jordan & Henderson (1995), quoted below. They also note that the data session – which they call the “Interaction Analysis Laboratory” – is both vitally important and difficult to describe in formal/procedural terms:

“Group work is also essential for incorporating novices because Interaction Analysis is difficult to describe and is best learned by doing. Much in the manner of apprentices, newcomers are gradually socialized into an ongoing community of practice in which they increasingly participate in the work of analysis, theorizing, and constructing appropriate representations of the activities studied.”

They also provide a great description of the actual mechanics of presenting data, and how specific heuristics in the organisation of the data session can guard against rambling, ungrounded theoretical speculation:

“The tape is played with one person, usually the owner, at the controls. It is stopped whenever a participant finds something worthy of remark. Group members propose observations and hypotheses about the activity on the tape, searching for specific distinguishing practices within a particular domain or for identifiable regularities in the interactions observed. Proposed hypotheses must be of the kind for which the tape in question (or some related tape) could provide confirming or disconfirming evidence. The idea is to ground assertions about what is happening on the tape in the materials at hand. To escape the ever-present temptation to engage in ungrounded speculation, some groups have imposed a rule that a tape cannot be stopped for more than 5 min. This means in practice that rambling group discussions are discouraged and that no single participant can speculate for very long without being called upon to ground her or his argument in the empirical evidence, that is to say, in renewed recourse to the tape.”

Jordan, B., & Henderson, A. (1995). Interaction analysis: Foundations and practice. The Journal of the Learning Sciences, 4(1), 39–103.

Recent accounts and empirical work

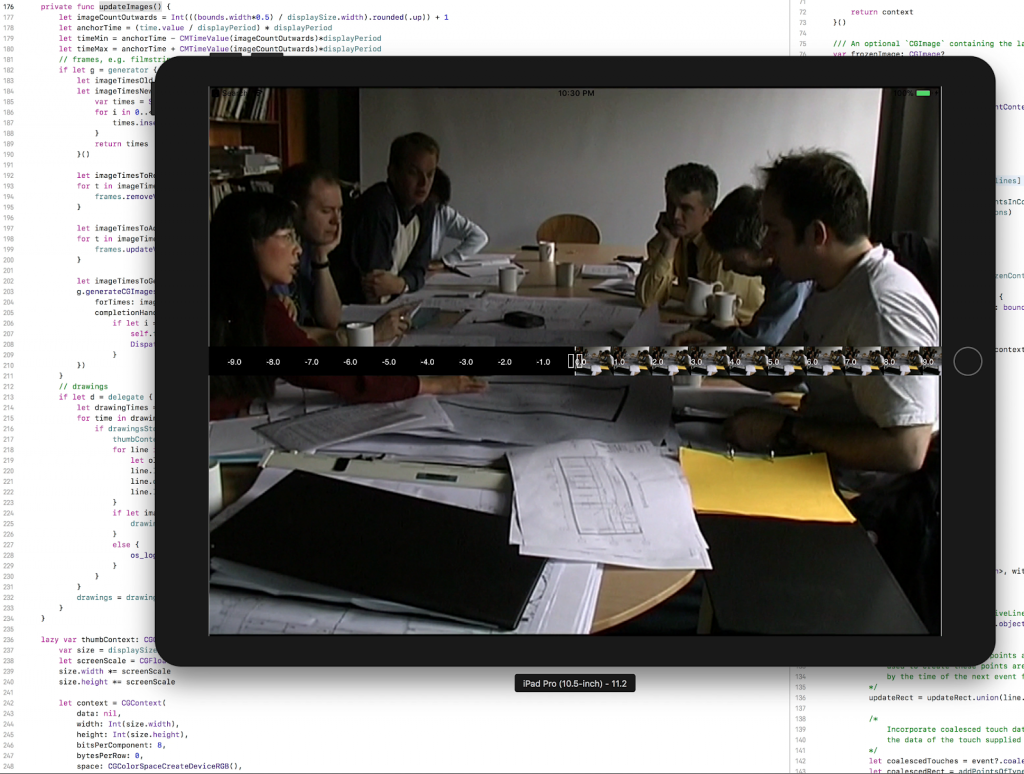

More recent instructional publications have included tips on data sessions. For example, in Heath, Hindmarsh & Luff (2010), there is one tip section, and one appendix on data sessions. They introduce the basic idea, and also mention its pedagogic function, as well as highlighting the opportunity for its use in interdisciplinary / workplace studies that involve practitioners from other fields:

“[Data sessions] can also be used to introduce new members of a team or research group into a particular project and can be very important for training students in video based analysis. On occasions it can also be helpful to have ‘practitioners’, personnel from the research domain, participate in data sessions, as they can provide distinctive insights, and can often help to clarify events that have proved difficult to understand. In data sessions it is important to avoid overwhelming participants with too much material. A small number of brief extracts of, say, no more than 20 seconds or so is fine. It is also helpful to provide transcripts of the talk as well as any other materials that may be useful for understanding the extracts in question.”

They also add a number of key points about the distinct benefits and caveats of running data sessions, paraphrased here:

- Identifying candidate phenomena for more detailed study.

- Enforcing evidential demonstration of analytic claims.

- Revealing issues/challenges in demonstrating analytic findings.

- Eliciting alternative/complementary perspectives.

- Generating new analytic ideas/issues and suggesting improvements for future data collection.

- Keeping one’s ‘hand in’, i.e. practising analysis on other people’s data to maintain a fresh eye/ear for your own research.

Then they say something explicit about the data session as a collaborative practice which I haven’t seen anyone else mention, but which seems absolutely crucial to me. The fact that this almost never comes up also reinforces my sense that EM/CA and its research practices in general are much less troubled by this particular problem than many other research contexts, which speaks extremely well for it as a community and its empirical/epistemic commitments in general:

“Data sessions are a collegial activity and are based on mutual trust. They should be treated as such and discussions of intellectual property and the like should be avoided. It is up to individual participants to reveal or withhold ideas that they have, if they do or do not want others to use those ideas in future analytic work.”

The appendix with more tips on data sessions (pp.156-157) has more very useful practical advice. To paraphrase:

- Limit the numbers to no more than 20 or so.

- Presenters should select 3-6 clips, ideally under 30s each.

- Do bring transcripts – even rough ones are helpful.

- Bring any supplementary material that is relevant/necessary for understanding the action.

- Look at one fragment of data at a time – approximate ratio of 20-30m on each 5s of recording.

- Don’t cheat and look ahead, or rely on analyst’s information exogenous to the clip itself.

- When it’s done, sum up, take notes and get general reflections.

Heath, C., Hindmarsh, J., & Luff, P. (2010). Video in qualitative research: analysing social interaction in everyday life. Sage Publications. pp. 102-103.

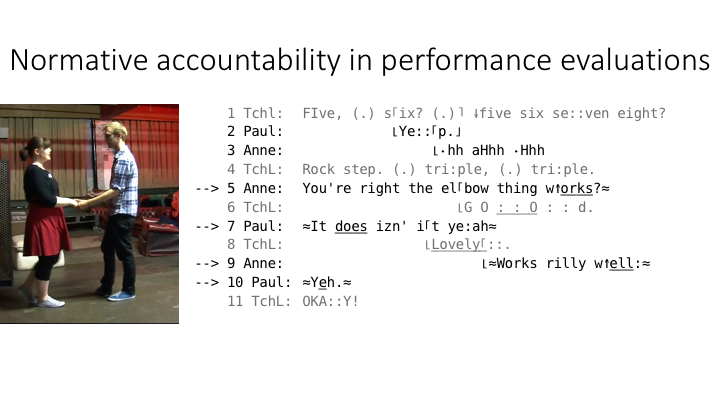

There is a wonderfully reflexive EM/CA analysis of a data session in a chapter by Harris, Theobald, Danby, Reynolds & Rintel (2012) in a volume on postgraduate pedagogical practices, in which the authors, who were themselves the data session participants, analyse their own session. They focus on the collaborative / peer pedagogical aspects of the session, and highlight the “fluidity of ownership of ‘noticing’” with reference to clear evidence of how these noticings can be done in this way.

Harris, J., Theobald, M. A., Danby, S. J., Reynolds, E., & Rintel, S. (2012). “What’s going on here?” The pedagogy of a data analysis session. In A. Lee & S. J. Danby (Eds.), Reshaping doctoral education: International Approaches and Pedagogies (pp. 83–96). London: Routledge.

A participant-observer account

Finally, my favourite description of work practices in the data session was written by Jon Hindmarsh in the affectionate and humorous Festschrift publication he and his colleagues edited for Christian Heath. Although written in an uncharacteristically participant-observer style, it describes the detail of both the pedagogical and analytic processes of “Heath’s natural habitat: The data session” very vividly. He includes:

- Delicate interrogations: where researchers are subtly probed as to why they selected specific clips.

- Occasioned exclamations: in which the seasoned analyst will hoot with infectious laughter or joy at a clip – infectious partly because it can leave less experienced researchers either shamefully nonplussed or scrambling to find a grounding for the source of the laughter.

- Transcription timings: opportunities to (delicately) rectify transcription errors.

- Re-characterisations: moments where a banal, if well-targeted observation is picked up and re-packaged as an elegant and insightful analysis – a form of agreement with some extra pedagogical/analytic impetus.

- Troubled re-characterisations: same as above, but done as an (initially veiled) disagreement, demonstrating poor targeting or a flawed analysis – again, always analytically useful and instructive, but less pleasantly so.

Hindmarsh, J. (2012). Heath’s natural habitat: The data session. In P. Luff, J. Hindmarsh, D. vom Lehn, & B. Schnettler (Eds.), Work, Interaction and Technology: A Festschrift for Christian Heath (pp. 21–23). London: Dept. of Management, King’s College London.

To close, here are some of my own reflections on the data session, and on why it constitutes such an important methodological and pedagogical practice.

Why I love data sessions and why you should too

The last description of the trade craft of a particular researcher’s data session is my favourite because it shows what an excellent apprenticeship situation this is. In teaching environments where empirical data is less straightforwardly ready-at-hand, there is a latency between the teaching moment and the understanding moment that is frustratingly difficult to bridge. In the data session, by contrast, the data is really doing the teaching, and the skilled analyst elicits both the observation and its pedagogical thrust from the same few seconds of interaction that have been in plain sight all along.

Furthermore, this public availability of the data as a mutually assessable resource to the group provides a constant check on authoritative hubris. More than once I’ve seen a junior analyst grasping and holding onto a powerful observation that provides irrefutable counter-evidence to a more experienced analyst’s position on some piece of data. Honesty and accountability flow in each direction in the data session, which is what makes it such a wonderful occasion for learning, analysis and – literally – serious fun.

I also like Jon Hindmarsh’s description because it really captures what it’s like to attend data sessions with different people who love the practice. I’m new to it, but thanks to the generosity of my supervisor Pat Healey and his enthusiasm for this work I’ve had the great pleasure of analysing data with pros such as Steven Clayman, Chuck Goodwin, Christian Heath, John Heritage, Yuri Hosoda, Shimako Iwasaki, Celia Kitzinger, Dirk vom Lehn, Gene Lerner, Rose McCabe, Tanya Stivers, Liz Stokoe and Sandy Thompson, not to mention my fellow students in these sessions from whom – given the peer pedagogical structure of the data session – I was able to learn just as much.

My experience has been that everyone approaches data very differently, and each person has a very distinctive style, analytic focus and approach. Nonetheless, the dynamics and epistemic arrangements of the situation allow for an amazingly rich exchange of ideas and empirical observations between disciplines, across interactional contexts, cultures, languages and focal phenomena. I am convinced that the data session is one of the most crucial factors in how EM/CA projects have made such robust findings in studies of interaction, language and culture, and that there is a great deal more to be understood and appreciated about how data sessions function.

I am also convinced that the data session has a very important place in disseminating EM/CA findings and practices beyond studies that centre on its traditional sociological/anthropological/linguistic contexts of study. There are sure to be ways of adapting some of its pedagogical/analytical dynamism to working with other contexts and types of recorded materials – although it’s debatable whether this form of analysis would really work with anything other than interactional data. In any case, as I mentioned at the beginning, I am very curious about other data session practices and would like to know more about the similarities and differences in how people run theirs, so I would be very grateful if you would send me your data session experiences/tips/formats and training materials.