Explaining research that uses conversation analysis to people with strong leanings towards quantitative methods is hard. I find they often fixate on a few methodological issues and never get round to discussing the research itself.

Earlier this term I gave an intentionally provocative talk about conversation analysis (CA) and its use in Cognitive Science, to see what kinds of issues came out of the woodwork very strongly. It certainly worked – I got quite a vigorous and enjoyable smackdown from my colleagues, and although I wasn’t very well equipped to deal with it at the time, I got my wish of an enumerated set of these questions that fell into three broad categories.

I took these categories and developed a short presentation entitled “conversation analysis for geeks” that in future I will prepend to my presentations to try and deal with these issues before diving into my research material.

The issues outlined below are interesting ones though, and looking at them in a bit more detail has helped me to understand why it’s important to make the case for interactional analysis, rather than – as I’ve seen done very skilfully by some researchers – try to skirt or minimise these issues in presentations using rhetorically compelling, persuasive but methodologically unchallenging evidence.

Quantification

People in my research environment are very familiar with quantification and statistical significance as ways of explaining human action and cognition, almost to the point of prejudice against other forms of explanation. This is a well-established problem that both Emmanuel Schegloff and Paul ten Have have addressed at length. Unfortunately, I often have only 15-20 minutes to present, and doubt I could get people to read these texts beforehand!

I’ve heard some researchers deal with this issue by invoking the words “qualitative” and “ethnography” to explain what they’re doing. However, I think this is an unsatisfying compromise. Calling what I’m doing qualitative ethnography makes it sound like I’m studying social groups and their cultures, whereas I’m studying the ethno-methods they use to articulate and negotiate their groupness and their environment. In the context I’m working in, this misunderstanding gives rise to others – such as the common complaint that CA obsesses over obvious trivialities. These ethno-methods obviously would seem trivial if the object of study were the sociology of a specific culture as a whole, rather than the generalisable methods by which a culture is articulated through interaction.

I think it’s worth arguing this because there are actually compelling reasons for making strong claims about how these methods are generalisable, and for working with quantitative methodologies to demonstrate that. For example, recent developments in CA such as Stivers et al.’s study of cultural variation in turn-taking use statistics very interestingly. Turn-taking, probably the most well-developed conversation analytic device, features as a motivating hypothesis for a statistical study of cultural variations. My sense is that this kind of CA-centric mixed methods collaboration is only possible because the empirical basis of CA is taken seriously as a systematic and formal analytical basis for the project.

Practical vs. theoretical reasoning

Or proof practices vs. logical proofs.

There are a few keywords that are real traps preventing interdisciplinary understanding. Although Cognitive Science is an interdisciplinary field, the use of terms like ‘proof’ and ‘reasoning’ seems to trip my presentations up very easily. When I’ve presented sequences of talk as evidence for analysis, I’ve had complaints that: “that’s evidence of interaction, not reasoning”. The problem seems to be that in Cognitive Science ‘reasoning’ necessarily implies a cognitive view of a mind as an abstract computing system, so ‘reasoning’ in this context is a private process, in the same way that ‘proof’ in Cognitive Science and a CS engineering context denotes formal, logical proof.

Again, not using this language at all, or trying to persuade people by avoiding the issue makes it hard to communicate the relevance of results to the interdisciplinary (but quantitatively/logically oriented) field of Cognitive Science. It may seem obvious that there are methodological differences and tensions between a cognitivist and phenomenological approach to studying human behaviour and action, but my experience of this research context is that methodological issues tend to be skirted for pragmatic, political and professional reasons.

Talking about ‘practical reasoning’ is the best way I’ve found to describe the way participants in conversation analyse the evidence available to them (whatever utterance or action occurred previously) to figure out what their relevant next action might be. Similarly, I’ve tried talking about ‘proof practices’ to describe the way participants in a conversation challenge and cross-check their own and others’ social actions to establish the accuracy of their analysis. I’m not sure that these work – but again, it seems important to retain these terms to be able to make claims about cognitive phenomena.

A good example of this is Celia Kitzinger’s article After post-cognitivism, in which she writes about the convergence of conversation analysis and discursive psychology as interaction-analytical methods with something to say about cognitive science. As Stivers et al. actually put into practice in their statistical paper, Kitzinger recommends using strong interactional evidence from CA to guide hypotheses that can form the basis of research with more quantitative methods – or in her case – cognitive models and simulations in computer and cognitive science.

Topical authority

This seems to me to be the strangest issue, perhaps because my training in aesthetics leads me to just assume that people have authority over their own expressions of likes and dislikes. I’m studying how people do these expressions – how they agree and disagree with each other over aesthetic assessments, but I’ve had people complain that if I want to find out about art, I should interview curators or experts instead of listening to what ‘ordinary people’ have to say about it.

I’m not quite sure what to make of this complaint – or how to react to it. It just seems obvious to me that what experts or people involved in aesthetic production say around their objects of professional aesthetic speculation, and how they interact around them, will be very different from the ways that people talk while walking around galleries or chatting about art and artists at home or at work. I’m interested in the latter – although I’m sure there’s a lot to be learned from both – and their intersections.

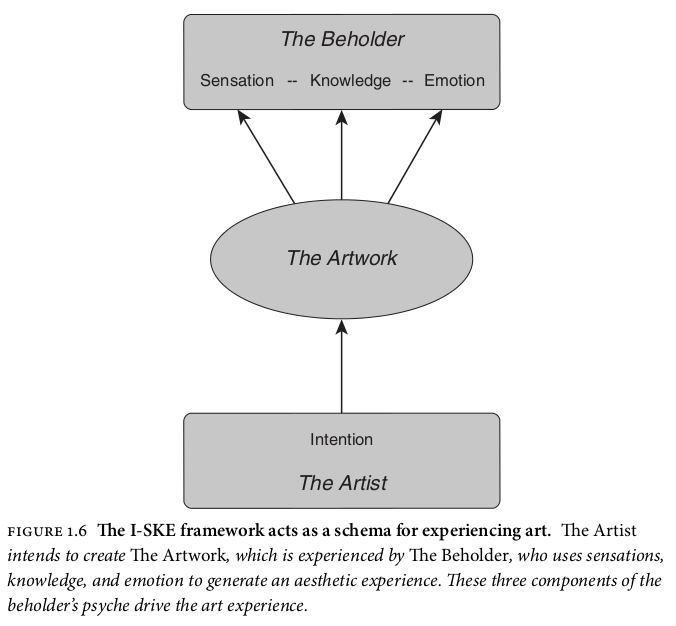

I think this question is informed again by a cognitivist theory of art that I recognise from Cognitive Science approaches to aesthetics, exemplified by this one from the introduction to “Aesthetic Science” by A. Shimamura.

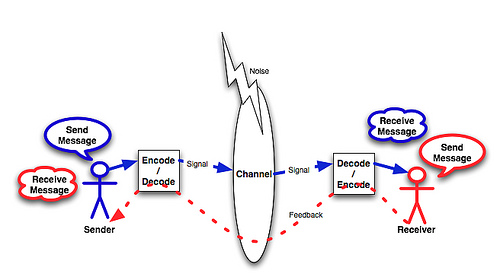

In this model of artistic communication, art is seen as an intentional state in the mind of the artist communicated to the beholder via the artwork: i.e. art as (a certain kind of) thought. This amounts to a kind of transmission theory of art based on a familiar idea in communication theory – that a cognate concept is encoded in speech signals then decoded back into a concept in the mind of the hearer.

Informed by this understanding, it makes sense that if you want to find out about art, you go as close to the source – the mind of the artist – as possible, rather than the ‘decode’ side, where, presumably, the viewer or recipient has to contend with errors in ideation produced by the cognitive decoding process as well as any noise that may have been introduced to the signal.

I guess dealing with this issue is the entire subject of my PhD so might be quite a challenge to condense.

Other questions and misunderstandings

Speaking to Jon Hindmarsh at the fantastic summer institute he and his colleagues at King’s College run on video and the analysis of social interaction, he added the following from his experience of using and presenting qualitative video analysis in the context of sociology, anthropology and ethnography.

He said he gets complaints that:

- Video recordings skew people’s natural behaviour

- ‘Fly on the wall’ video recordings are ethically unsound

Perhaps as these fields are closer to the origins of these methodologies in sociology, these issues are more familiar to researchers. I’ve not experienced these personally, probably because I haven’t used video in my analysis, and perhaps there’s an assumption that audio recordings will skew people’s behaviour less (not that I’ve noticed it in my recordings or the data I’ve collected from the Audio BNC). I have no idea why fly-on-the-wall recordings might be ethically unsound if the data is treated carefully, but it sounds as though the issue may be more ideological than practical.

——-

I’m interested in hearing about other questions and misconceptions from other fields, and what strategies conversation analysis and ethnomethodology researchers are using to deal with them – or skirt around them in ways that don’t compromise their empirical claims.